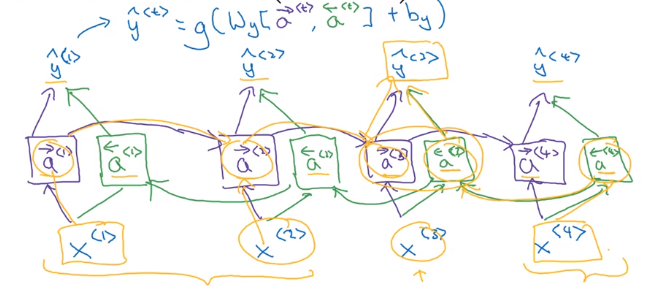

Sequence modeling is the task of modeling sequential data. Modeling sequence data means creating a mathematical representation for understanding and studying sequential data, and using that understanding to generate, predict, or classify such data for a specific application.

Sequential data has two properties:

- It follows an order (contextual arrangement).
- Its length varies (potentially infinitely).

Sequence data is difficult to model because of these properties, and it requires a different method. For instance, if sequential data is fed through a feed-forward network, the network might not be able to model it well, because sequential data has variable length. A feed-forward network works well with fixed-size input, and it doesn't take structure into account well.

Convolutional neural networks, on the other hand, were created to process structures, or grids of data, such as an image. They can deal with long sequences of data, but are limited by the fact that they can't order the sequence correctly.

So, how do we build deep learning models that can model sequential data? Modeling sequential data is not an easy task.

For instance, when it comes to modeling a supervised learning task, our approach is to feed the neural network with pairs of input (x) and output (y). During training, the model learns to map the input to the output by producing values that approximate the original ones.

Once we know the probability of each word (from the corpus), we can find the probability of the entire sentence by multiplying the probabilities of the individual words.

For instance, if we were to model the sentence "Cryptocurrency is the next big thing", it would look something like this:

$$p(\text{Cryptocurrency}) \cdot p(\text{is}) \cdot p(\text{the}) \cdot p(\text{next}) \cdot p(\text{big}) \cdot p(\text{thing})$$

The above model can be described in a formula:

$$p(x) = \prod_{t=1}^{T} p(x_t)$$

Each word is given a time step index (t, t-1, t-2, ..., t-n), which describes the position of an individual word.

But it turns out that the model described above does not really capture the structure of the sequence. Why?

Because one particular word can have a higher probability than all the other words. In our example, the probability of the word "the" is higher than that of any other word, so if we pick the most probable word at every position, the resulting sequence will be "The the the the the the".

Modeling p(x|context)

We could still modify the same model by introducing conditional probability, assuming that each word now depends on the other words in the sequence (here, the ones that precede it) rather than being independent. We could then model the sequence in the following way: $p(x_T) = p(x_T \mid x_1, \ldots, x_{T-1})$. The same sentence "Cryptocurrency is the next big _" can now have a range of options to choose from.

This approach reduces the scalability issue, but not completely:

- It reduces the scalability issue only by a small amount.
- The context of the sentence is lost if the sentence is long.

Context vectorizing is an approach where the input sequence is summarized into a vector, and that vector is then used to predict what the next word could be.

$f_\theta$ summarizes the context in $h$ such that:

$$h = f_\theta(x_1, \ldots, x_{t-1})$$

Once we find the context vector $h$, we can then use a second function $g$, which produces a probability distribution.

The advantages of context vectorizing are:

- It can operate on variable-length sequences.
- It is differentiable, so it can learn (through backpropagation).
- Context is preserved in short sentences or sequences.

So far we have seen what sequential data is and how to model it. In the next section, we will learn about RNNs and how they use context vectorizing to predict the next word.

What are recurrent neural networks (RNNs)?

Recurrent neural networks are used to model sequential data with the time step index t, and they incorporate the technique of context vectorizing.

Context vectorizing acts as "memory" that captures information about what has been calculated so far. It enables RNNs to remember past information, so they are able to preserve information across long and variable-length sequences. Because of that, RNNs can take one or multiple input vectors and produce one or multiple output vectors.
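To make the idea of a context vector concrete, here is a minimal sketch of a recurrent network in plain Python. It is an illustration under stated assumptions, not a reference implementation: the class name `TinyRNN`, the weight names, the hidden size, the tanh update, the one-hot word encoding, and the softmax output are all choices made for this example. The weights are random and untrained, so the predicted probabilities carry no meaning; the point is only to show how a sequence of any length is folded into a fixed-size vector h (the role of f_θ) and how a second function (the role of g) turns h into a probability distribution over the next word.

```python
import math
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def softmax(scores):
    """Turn arbitrary scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class TinyRNN:
    """Hypothetical, untrained RNN used only to show the data flow."""

    def __init__(self, vocab, hidden_size=4, seed=0):
        rng = random.Random(seed)
        self.vocab = vocab
        self.hidden_size = hidden_size
        self.w_xh = random_matrix(hidden_size, len(vocab), rng)    # input -> hidden
        self.w_hh = random_matrix(hidden_size, hidden_size, rng)   # hidden -> hidden
        self.w_hy = random_matrix(len(vocab), hidden_size, rng)    # hidden -> output

    def one_hot(self, word):
        return [1.0 if w == word else 0.0 for w in self.vocab]

    def context(self, words):
        """Role of f_theta: summarize the words seen so far into h."""
        h = [0.0] * self.hidden_size
        for word in words:  # one update per time step t
            from_input = matvec(self.w_xh, self.one_hot(word))
            from_memory = matvec(self.w_hh, h)
            h = [math.tanh(a + b) for a, b in zip(from_input, from_memory)]
        return h

    def next_word_distribution(self, words):
        """Role of g: map the context vector h to p(next word | context)."""
        h = self.context(words)
        return dict(zip(self.vocab, softmax(matvec(self.w_hy, h))))


vocab = ["cryptocurrency", "is", "the", "next", "big", "thing"]
rnn = TinyRNN(vocab)

short_context = rnn.context(["the", "next"])
long_context = rnn.context(["cryptocurrency", "is", "the", "next", "big"])
distribution = rnn.next_word_distribution(
    ["cryptocurrency", "is", "the", "next", "big"]
)
```

Both `short_context` and `long_context` have the same size even though the inputs differ in length, which is what lets the model operate on variable-length sequences. Because h is updated one word at a time, feeding the same words in a different order produces a different context vector.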

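As a contrast with context vectorizing, the independent-word model p(x) discussed in this article can be sketched in a few lines. The toy corpus below is invented for illustration, and the helper names `sentence_probability` and `generate` are hypothetical. The sketch estimates each word's probability from corpus counts, multiplies those probabilities to score a sentence, and then generates text by picking the most probable word at every position.

```python
from collections import Counter
from math import prod

# Hypothetical toy corpus, made up for this example.
corpus = (
    "the market is the next big thing . "
    "cryptocurrency is the next big thing . "
    "the price of the coin is the news"
).split()

counts = Counter(corpus)
total = sum(counts.values())
word_probability = {word: count / total for word, count in counts.items()}


def sentence_probability(words):
    """p(x) as the product of p(x_t), treating every word as independent."""
    return prod(word_probability.get(word, 0.0) for word in words)


def generate(length):
    """Pick the most probable word at every position, ignoring context."""
    most_likely = max(word_probability, key=word_probability.get)
    return [most_likely] * length


sentence = "cryptocurrency is the next big thing".split()
shuffled = "thing big next the is cryptocurrency".split()

p_sentence = sentence_probability(sentence)
p_shuffled = sentence_probability(shuffled)  # same factors, different order
generated = " ".join(generate(6))  # "the the the the the the"
```

The sentence and its shuffled version receive the same score (up to floating-point rounding), and the generated text repeats the single most frequent word. Both results show that this model ignores word order, which is the gap that conditioning on context is meant to close.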